The Danger of AI Hallucinations and How Businesses Should Handle Them

Everyone is excited about AI productivity gains. Fair enough. The gains are real.

But if you are using AI in serious business workflows, there is one problem you cannot treat as a side note: hallucination.

A model can respond with full confidence, clean formatting, and a professional tone while still being wrong. That is exactly what makes this risk dangerous. It does not look like an error when you first read it.

In this article I will break down what hallucination is, where it creates business risk, how to reduce it, and why teams that manage this well actually move faster than teams that ignore it.

Key message: Hallucination is not just a model quality issue. It is an operational risk issue.

What is an AI hallucination?

A hallucination is when an AI model generates content that sounds plausible but is factually incorrect, fabricated, or unsupported by real data.

This can take different forms:

- Inventing sources, links, quotes, or legal references

- Returning outdated facts as if they were current

- Misstating numbers, entities, or timelines

- Filling gaps with assumptions when context is missing

The key point is simple: hallucination is not a "bug you can patch once." It is a behavior pattern of probabilistic models. You need architecture and process around it.

Real examples that hurt businesses

The easiest way to underestimate hallucinations is to think only about consumer chat use. In enterprise environments, the impact is much bigger because output often drives decisions and operations.

- Imagine a customer support assistant that confidently explains the wrong refund policy. One wrong response can be fixed. Ten thousand wrong responses become operational debt, escalations, and churn.

- Or take a sales enablement assistant that invents product capabilities in proposal text. It may help a team move faster this week, but it creates legal and trust problems once contracts are signed on incorrect assumptions.

- In finance and operations, the risk is even more direct. If an AI summary tool misstates a KPI trend or attributes the wrong root cause, leadership decisions can be made on false signals.

- In regulated sectors, hallucinated compliance advice is not just inaccurate. It can create legal liability.

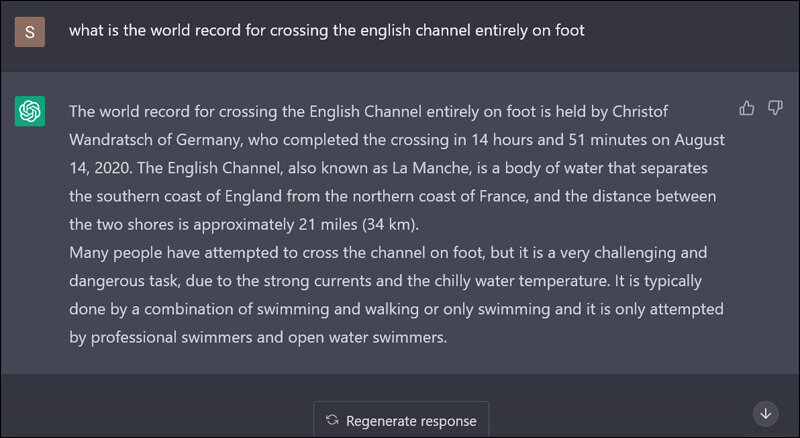

A simple hallucination example

Below is a classic hallucination pattern: the model answers confidently, but the answer is impossible in the real world.

The question asks about crossing the English Channel entirely on foot. The model still returns a precise name, date, and duration, even though the premise is physically impossible. This is exactly why confidence is not the same as correctness.

Why hallucinations happen

Most business stakeholders ask: "Why would a smart model make things up?"

Because the model is optimized to predict a probable sequence of next tokens, not to guarantee factual correctness in your specific business context.

When context is weak, ambiguous, or missing, the model still tries to complete the task. If retrieval quality is poor, if prompts are vague, or if tools are unavailable, the model often fills the gap with plausible language.

That is why this is not just a model problem. It is a system problem: data quality, retrieval, prompt design, guardrails, and human review all matter.

Practical tips to reduce hallucinations

You cannot fully eliminate hallucinations. You can reduce their frequency and impact to levels the business can accept.

- Start with grounded generation. Use retrieval-augmented flows so answers are tied to approved internal sources, not only to model memory.

- Force citation behavior where relevant. If the model cannot point to source documents, it should explicitly say it is uncertain instead of inventing certainty.

- Design prompts for bounded behavior. Ask the model to answer only from provided context and to refuse when evidence is insufficient.

- Use tool calling for factual operations. For prices, account status, inventory, policy lookups, and transaction data, call systems of record instead of asking the model to "remember."

- Implement confidence and risk routing. Low-confidence or high-impact outputs should go through human review before being sent externally.

- Measure the hallucination rate as an operational metric. Treat it like any other quality KPI, with test sets, regression checks, and release gates.

- Separate use cases by risk class. A creative brainstorming assistant can tolerate far more uncertainty than a compliance or financial assistant.
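As a minimal sketch of the citation and refusal tips above, the Python below checks a draft answer's citations against the documents that retrieval actually returned. The names `DraftAnswer`, `enforce_grounding`, and `REFUSAL` are hypothetical, and the sketch assumes your pipeline can extract cited source IDs from the model output. It only verifies that each citation points at a retrieved source, not that the text is truly supported by it, so it complements human review rather than replacing it.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical fallback message used when evidence is missing.
REFUSAL = "I can't confirm this from the approved sources."


@dataclass(frozen=True)
class DraftAnswer:
    text: str
    cited_ids: tuple[str, ...]  # source IDs the model claims to rely on


def enforce_grounding(draft: DraftAnswer, retrieved_ids: set[str]) -> str:
    """Return the draft only if every citation points at a retrieved source."""
    if not draft.cited_ids:
        return REFUSAL  # the model offered no evidence at all
    if any(cid not in retrieved_ids for cid in draft.cited_ids):
        return REFUSAL  # the model cited something that was never retrieved
    return draft.text
```

A draft that cites an unknown document, or cites nothing, is replaced by the refusal message instead of being sent to the user.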

At Blits, we mitigate this risk with guardrails for agents and dedicated validation layers for Agentic AI workflows before high-impact actions are executed.

Why this gets riskier in agentic and automated AI

In a normal chat interface, a hallucination usually becomes a bad answer. In agentic or automated AI, the same hallucination can become a bad action.

If an agent misreads policy context and still executes a workflow, it can send the wrong customer communication, trigger incorrect refunds, update records with false data, or escalate the wrong cases. The issue is no longer just content quality, but operational impact.

This risk grows when systems are fully autonomous, connected to multiple tools, and allowed to run at high speed. Small errors can cascade across APIs and business processes before a human notices.

That is why agentic systems need stronger controls than standard assistants: least-privilege tool access, approval gates for high-impact actions, sandbox testing before production rollout, and full audit trails for every decision and tool call.
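The controls listed above can be sketched as a single gate that every tool call passes through. This is an illustrative Python example, not a description of any particular product: `ToolGate`, its fields, and the outcome labels are hypothetical names, and a real system would persist the audit log and connect approvals to an actual review workflow.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolGate:
    """Hypothetical wrapper that every agent tool call must pass through."""

    allowed: set[str]         # least privilege: tools this agent may use
    needs_approval: set[str]  # high-impact tools that require human sign-off
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def call(self, tool: str, func: Callable[..., Any], args: dict[str, Any],
             approved: bool = False) -> tuple[str, Any]:
        if tool not in self.allowed:
            outcome = "denied"
        elif tool in self.needs_approval and not approved:
            outcome = "pending_approval"
        else:
            outcome = "allowed"
        # Record every attempt, including blocked ones, before running anything.
        self.audit_log.append({"tool": tool, "args": dict(args), "outcome": outcome})
        result = func(**args) if outcome == "allowed" else None
        return outcome, result
```

With `issue_refund` listed in `needs_approval`, a call without `approved=True` returns `("pending_approval", None)` and the refund function never runs, while the attempt still appears in `audit_log`.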

The more automated the system, the more important it is to design for safe failure. Agents should pause, ask for clarification, or escalate to a human when confidence drops, instead of "powering through" uncertainty.
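A rough sketch of that safe-failure rule in Python follows. It assumes the system can produce some confidence score between 0 and 1 for each planned step, which is itself a design problem the article does not specify. The function name, the `NextStep` values, and both thresholds are placeholders chosen for illustration.

```python
from enum import Enum


class NextStep(Enum):
    PROCEED = "proceed"
    ASK_CLARIFICATION = "ask_clarification"
    ESCALATE = "escalate_to_human"


def decide_next_step(confidence: float, high_impact: bool, missing_inputs: bool) -> NextStep:
    """Pick a safe next step instead of pushing through uncertainty."""
    if missing_inputs:
        # Required context is absent: ask rather than guess.
        return NextStep.ASK_CLARIFICATION
    # Placeholder thresholds: high-impact steps must clear a stricter bar.
    threshold = 0.95 if high_impact else 0.80
    if confidence < threshold:
        return NextStep.ESCALATE
    return NextStep.PROCEED
```

The design choice here is that the default path under doubt is a stop, not an action: the agent only proceeds when inputs are complete and confidence clears the bar for that impact level.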

In agentic systems, hallucination risk shifts from wrong words to wrong actions.

At Blits, this is exactly why we implement guardrails for agents and validations for Agentic AI flows: to enforce boundaries, check outputs, and reduce the chance that uncertain model behavior turns into a real operational mistake.

The business value of handling hallucinations correctly

Some teams see hallucination control as a brake on innovation. In reality, it is the opposite.

- Make outputs reliable, and business teams start trusting automation.

- Build trust, and adoption increases across teams and channels.

- Measure quality, and you can scale use cases without multiplying risk while spending less time firefighting.

- Clarify governance, and procurement and legal move faster because the risk posture is explicit.

- Design for model switching, and you can adopt better models quickly without rebuilding from scratch.

In short, hallucination management is not only about avoiding mistakes. It is a competitive advantage in enterprise AI execution.

A practical operating principle

The question is not "Can hallucinations happen?" They can.

The better question is: what is your acceptable error threshold per use case, and what controls ensure you stay below it in production?
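One way to make that question concrete is a release gate that compares the measured hallucination rate on a reviewed test set with a per-use-case limit. The Python below is a sketch under stated assumptions: the use-case names and threshold values are invented for illustration, and `EvalResult` and `passes_release_gate` are hypothetical names. The real limits are a business decision.

```python
from dataclasses import dataclass

# Illustrative limits only; actual values depend on your risk appetite.
MAX_ERROR_RATE = {
    "brainstorming": 0.20,
    "customer_support": 0.02,
    "compliance": 0.001,
}


@dataclass(frozen=True)
class EvalResult:
    use_case: str
    total: int         # test-set answers that were reviewed
    hallucinated: int  # answers judged wrong or unsupported


def passes_release_gate(result: EvalResult) -> bool:
    """Allow a release only if the measured rate is within the use-case limit."""
    if result.total == 0:
        return False  # no evidence means no release
    rate = result.hallucinated / result.total
    return rate <= MAX_ERROR_RATE[result.use_case]
```

Running the same check on every model, prompt, or retrieval change turns the threshold into a regression test rather than a one-time audit.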

Teams that answer that question clearly build AI systems that are not only impressive in demos, but dependable in real business operations.
